Daniel Martin (@etdsoft), creator of Dradis Framework and founder of Security Roots Ltd, was interviewed in Episode 11 of PaulDotCom Security Weekly en Espanol.

We talked about Dradis Framework, Ruby, Rails, open-source in general, Dradis Pro, VulnDB HQ, Nokogiri and a number of other things.

The podcast is in Spanish. It starts with an introduction by Pauldotcom’s host, Carlos Perez aka “Darkoperator” (@Carlos_Perez), and at about 1m46 the interview proper starts:

[PaulDotCom] How did your interest in security start?

[Daniel Martin] It was some time ago, there’s always been a computer in my house as one of my brothers studied computer science. Our first proper computer was an 8086.

I started on the sysadmin side of things, playing with the different Linux distros available back then. When I got to college, I got involved in different Linux and electronics student associations. I even gave a bunch of *introduction to Linux* training courses to other students.

At the very end of my masters, I was lucky enough to join my university’s Solar Decathlon team. I was tasked with all things geek: from sysadmin, to setting up the wireless links to providing a web interface to the house’s control systems and securing it all.

After that, I went to work in the security industry from day one. First in Spain, for an integrator, soon after that I moved to the UK where I lived for four years. About a year ago I moved back to Spain although I’m still working for NGS Secure in the UK.

[PDC] I know them, they are the creators of NGSSquirrel and some other great auditing tools. As we discussed before, I’ve used their tools in the past and they are very useful. Unfortunately these days, as a Director at Tenable, I don’t get to spend a lot of time tinkering around with tools and techniques… only in my spare time do I get to review tools and the latest attack techniques, and keep up with the work on this podcast and my website.

After hearing your story, you reminded me of a question that I’ve always had. These days people that want to get involved in security jump feet first into it: they start learning C and assembly, or this attack technique or how to write a brute-forcer, how do I do this and how do I do that. However, not too many people take the time to learn about the sysadmin side of things and the tasks involved in day-to-day systems administration. Do you think security courses and degrees should pay more attention to this side of things? Should people that want to get into security develop some sysadmin skills initially?

[DM] For me there are two things that really make a difference on your day-to-day: on one hand there is this aspect of sysadmin you have mentioned (e.g. having compiled your own Linux kernel, creating small shell scripts for sorting out little tasks, etc.), on the other hand having a background in development is also a great asset. You’re always going to have to put together a script or small program because you don’t know what you’re going to be facing. It’s good to know a dash of .Net, Java, Ruby, or whatever. Having this focus on both the sysadmin and development aspects should definitely be pushed.

[PDC] We are on the same page there. One of the first things I usually recommend to people that want to get into security is to try to rewrite some of the very basic tools. You can use whatever you feel like, you can use Bash, Perl, Python… but the important bit is that you write it yourself so you get to learn how it works under the hood. Match your tool’s output with the proper one, make packet captures, etc. This forces them to go beyond the script-kiddie approach, the point-and-click mentality of just running XYZ tool and consuming its output without understanding how it works.

Dradis Framework and Dradis Professional Edition

Another area that I feel is left a bit unattended is the business side of things. This is one of the things I really like about Dradis, which helps a lot in one of the areas that people struggle with: the organisation of information. A lot of the folks in security suffer from ADD. I can lose my focus very easily. This is even worse now with my daughter running around the house. When I’m getting into _the zone_ and I’m starting to craft my code, my little girl comes knocking at the door, or comes in with her Barbie doll that needs a change of clothes, or complains about Netflix not working properly… and I completely lose my train of thought. Thankfully Dradis helps me to get back on track because the information is well organised in a single location. This is especially handy when the most painful part arrives: writing the report. Dradis provides a nice shortcut to get my reporting done more efficiently.

So, how did the Dradis project start?

[DM] To be honest, it was driven by the sort of thinking you just mentioned. During my day-to-day work as a security consultant I felt that pain: having to organise all the data you are collecting, having to create a thorough report, etc. In addition, when you are working as part of a team you have the additional overhead of managing everyone’s findings, splitting the tasks, figuring out what has been covered already, what is still to be covered, etc. At the time (and this is 2007) there was not a whole lot in terms of collaboration tools to help in managing this process. After putting together the first release of the tool, I presented it to my colleagues and then straight to the open source community [first on SourceForge and these days on GitHub].

[Audio fades here, but I was explaining about how the over 23,000 downloads, more like 24,000 now, of the open-source package encourage us to carry on working on the tool.]

[PDC|9m43] There is a feature in Dradis that seems to be lacking in some other pentesting tools. Tools like Core, Metasploit or Canvas don’t have these teamwork and collaboration capabilities or modules to gather information from 3rd party tools… this flexibility. Some of them, like Metasploit, are starting to have it: through the GUI you can import some stuff, although other stuff remains hidden and you need to use the console to get it. Core now integrates with Metasploit. Canvas still doesn’t let you import from other tools. Dradis fills in this gap in the pentesting and audit market.

[DM] Some of these tools you’re referring to are commercial products that are designed as fairly closed systems. They aim to be a catch-all solution for all their customers. In contrast, Dradis as an open-source project thrives on that openness. We try to be as broadly connected as possible, trying to make the most of what other tools in the pentester’s arsenal can provide.

[PDC|11:15] Dradis is written in Ruby, what made you decide Ruby was the best fit?

[DM] Well, to be honest, I was looking into learning Ruby back when the project started. They say it’s always better to have a concrete project to work on when you are learning a new language. If on top of that you put the features that you get out of the box with Ruby on Rails (which was starting to gain popularity) the decision of picking Ruby was an easy one to make.

[PDC|12:07] What license is Dradis distributed under?

[DM] GPLv2

[PDC|12:12] You also have a commercial product called Dradis Pro?

[DM] That’s right, after releasing the tool in 2007 we’ve had a bunch of contributors over the years like Siebert, Rory, Ken and Robin. Today, five years and 23,000 downloads later the story is a bit different. When we first started it was the pentesters and consultants themselves that saw the value in what we were doing. They had the same pain we had and they saw in Dradis a tool to make their lives easier. Over time, the companies these early adopters worked for started to see the benefits of our approach. They realised that each and every single one of their projects had to deal with collaboration, collation of results, etc. and that it would make sense to be systematic about how to approach this problem. They reached out and contacted me to find out whether commercial support was available or whether I could create the custom plugins they needed. This demand is what drove me to start Security Roots and launch Dradis Professional Edition in June 2011.

[PDC|14:08] What is the difference between the Pro edition and the Community edition?

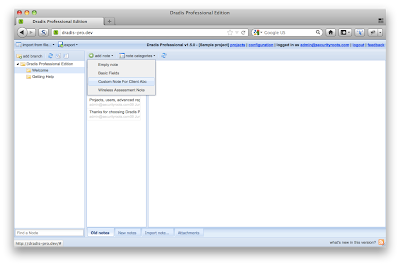

[DM] The main difference is that Pro is tailored to organisations. It is a virtual appliance that is deployed in the company’s network so everyone in the team can access it, create an account and start using it. It also supports multiple projects: you just log in and choose what project you want to work on today. This allows for several different teams working on different projects at the same time. It’s very easy to upgrade too, so it’s easier to have the latest version installed (as opposed to each person having to upgrade their own version of Dradis every time a new release is shipped).

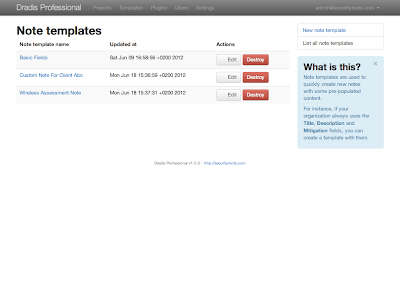

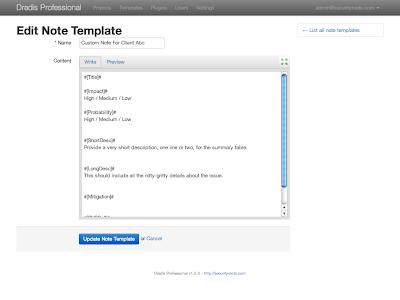

This type of organisation usually pursues a specific goal: they need to create a high-quality deliverable for their clients. In Dradis Pro we have put a lot of effort into making sure that complex reports can be easily generated and customised, even to the point where you can use your existing templates and harness the power of Dradis very easily without having to start from scratch.

[PDC|15:50] One of the features I like the most in Dradis is the ability to import data from external tools like Nikto or Nessus. What other sources of information can be imported into Dradis?

[DM] I think we currently have around 15 import and upload plugins [You’ll hear me typing like a monkey to get a precise answer…]. So the list includes: Burp, Nessus, Nmap, NeXpose, Metasploit, OpenVAS, you can also get entries from the OSVDB, Retina Scanner, webapp testing frameworks like w3af and wXf, Zed proxy… there are a lot of them!

[PDC|17:04] Yeah, I can see that. All these are plugins written in Ruby, right? How do you create one of these plugins?

[DM] Yes, these are vanilla Ruby scripts, they are easy to make, some of them have even been contributed by our user base. People give back to the project and sometimes when they create a plugin they need, like the Retina one, they just share it with us.

For people wanting to develop their own plugins we have created a bunch of step-by-step tutorials that can be found in the Dradis Guides site. There are guides on how to create Upload plugins (I believe the example we have is based on our work on the Zed Attack Proxy plugin) that show you how to parse the output produced by a 3rd party tool. There is another one for Import plugins, which are used to query external systems and retrieve the results like what we do with the OSVDB.
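
As a rough illustration of the parsing step that an Upload plugin performs, here is a minimal sketch. It assumes a made-up XML layout (`scan`, `host` and `finding` are hypothetical names, not any real tool’s schema) and it does not show the Dradis plugin API itself. REXML is used only because it ships with Ruby and needs no extra gems.

```ruby
require 'rexml/document'

# Hypothetical scanner output; every real tool has its own schema.
sample_xml = <<~XML
  <scan>
    <host address="10.0.0.1">
      <finding severity="high">HTTP TRACE is enabled</finding>
      <finding severity="low">Server banner discloses version</finding>
    </host>
    <host address="10.0.0.2">
      <finding severity="medium">Directory listing enabled</finding>
    </host>
  </scan>
XML

# Turn the XML into plain hashes, one per finding, tagged with its host.
def parse_findings(xml)
  doc = REXML::Document.new(xml)
  findings = []
  doc.elements.each('scan/host') do |host|
    host.elements.each('finding') do |finding|
      findings << {
        host: host.attributes['address'],
        severity: finding.attributes['severity'],
        text: finding.text.strip
      }
    end
  end
  findings
end

findings = parse_findings(sample_xml)
findings.each do |f|
  puts "#{f[:host]} [#{f[:severity]}] #{f[:text]}"
end
```

In a real plugin, each hash would presumably be turned into a note attached to the right host instead of being printed.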

[PDC|18:36] I’ve been checking the site because I want to write a plugin for my very own tool, dnsrecon. It generates output in three different formats: SQLite3, XML and CSV. I wrote an XML and CSV import plugin for Metasploit but Metasploit is not very well suited for import and data manipulation, so I thought I should give Dradis a shot because of its friendlier approach to external data manipulation. Anyway, I think I’m going to start writing my own Dradis plugin.

[DM] That sounds great. You can find a lot of good examples already in the source code to read XML. There is also a nice example of reading from a SQLite3 source in the SureCheck plugin. I don’t know if you’re familiar with this tool, but it’s a very handy build review tool, created by another NGS colleague, for Solaris, Linux and Windows.

There is also another way to get your results into Dradis even if you don’t want to create a plugin. You can use the HTTP interface that Dradis provides. We provide a REST API that can be invoked from within your tool to add data to Dradis on the fly. I believe that the fine wXf team have this implemented in their tool.
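
To make the idea concrete, here is a minimal sketch of how a tool might prepare such an HTTP call with Ruby’s standard library. The endpoint path (`/api/notes`), the JSON field names, the host name and the credentials are all hypothetical placeholders; the interview does not describe the actual API, so check the Dradis documentation for the real routes and payloads. The request is only built, not sent.

```ruby
require 'json'
require 'net/http'
require 'uri'

# Build (but do not send) a POST request that would add a note.
# Path, JSON fields and credentials are hypothetical placeholders.
def build_note_request(base_url, text, user:, password:)
  uri = URI.join(base_url, '/api/notes')
  request = Net::HTTP::Post.new(uri)
  request.basic_auth(user, password)
  request['Content-Type'] = 'application/json'
  request.body = JSON.generate(note: { text: text })
  request
end

request = build_note_request('https://dradis.example.test',
                             'HTTP TRACE is enabled on 10.0.0.1',
                             user: 'tester', password: 'secret')
puts "#{request.method} #{request.path}"
puts request.body

# Sending it would look roughly like this (needs a live server):
# uri = URI('https://dradis.example.test')
# Net::HTTP.start(uri.host, uri.port, use_ssl: true) { |http| http.request(request) }
```

Building the request separately from sending it keeps the sketch testable offline and makes it easy to log exactly what a tool would push.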

[PDC|20:45] Oh, I wasn’t aware of that! Sounds very interesting. I may check out your Metasploit plugin and maybe update it if there is something that can be added.

[DM] Well, that would be very interesting because I think that with their latest changes in the XML-RPC interface our plugin may have broken.

[PDC|21:12] Yes, I think they want you to connect directly to their DB. They have made a few changes. One of the things I must admit as a Metasploit community core contributor is that it changes a lot and changes very fast.

[DM] Well, we have suffered something similar with Ruby on Rails which is a library that evolves really fast. Too fast for our liking…

[PDC|21:38] Yes, you mentioned before that you found a bug in Ruby on Rails due to the changes they’ve introduced in the newer libraries. Are you guys trying to keep up with the latest stable release of Rails?

[DM] Yes, we just released Dradis v2.9 which features Rails v3.2.0. Unfortunately I believe they released v3.2.1 two days before we shipped and we couldn’t update in time.

The problem is that Rails is a very fast-paced community, they are always innovating and adding features. Recently with the change from v2.x to v3.x they have introduced a lot of new stuff that is not always backwards-compatible. It made sense for us to switch to 3.2 as soon as possible to avoid further issues down the line.

[PDC|22:50] I know what you’re talking about. I still remember when Ruby moved from v1.8 to v1.9. The pain of handling strings, multi-line strings, character set encoding, UTF… These days I just assume v1.9 in my code and if someone comes complaining about it not working in 1.8.5 or 1.8.6 I just ask them to move to 1.8.7 (because they already backported much of that stuff). If they don’t want to upgrade to 1.8.7, that’s too bad, but I won’t be adding version-checking code to my tools. Something similar happened with dnsrecon. After writing it in Python v2.6, and even knowing that Google (who employs Guido) hasn’t really moved to Python 3.x, I decided to take the leap myself. I didn’t know what I was getting myself into. What a headache! The code ended up cluttered with version-checking and error-checking code. Definitely not a pleasurable experience.

[DM] For us, the change between Ruby 1.8 and 1.9 is not so painful as Rails takes care of most of the underlying magic that makes it work in both worlds. Nevertheless, this current release (v2.9) is the last one in which we are planning to support Ruby 1.8. From now on, we will be 1.9.3 and above.

The other issue, and we haven’t discussed Dradis project goals yet, is something we realised very early on: every single one of our users is going to run Dradis in a different way, on a slightly different platform. This means that a lot of work and a lot of hours go towards ensuring that Dradis is cross-platform. There are a lot of headaches involved in getting Ruby to play nicely on Windows. That would be another pain point for us.

[PDC|25:58] Surely someone is going to say they want to run Dradis using JRuby, MRuby… etc. That’s one of the things we saw in Metasploit. People wanted to make it compatible with JRuby, because JRuby is written in Java which should be faster when you have to manage a lot of information. But then I believe JRuby is only compatible with Ruby 1.8.6… we are walking backwards!

[DM] Thankfully no one has requested JRuby (or any other Ruby VM) compatibility. However, should the day come, the fact that we are hosted on GitHub and integrated with the Travis CI continuous integration system should make it easy for us to test our code base against different Ruby VMs. Using Travis, there is a way to specify what Ruby VMs you want to run your tests against. We are currently running them on v1.8.7, v1.9.2 and v1.9.3, and are looking into running them just on v1.9.3. If someone requests another Ruby VM, hopefully adding it to the continuous integration engine would do the trick.

[PDC|27:22] So, what are these project goals you were talking about? What is in the tool’s roadmap?

[DM] We are still working on improving the user experience. We need to make Dradis even more user friendly and stable.

We’re also focused on collaboration. A couple of versions ago (in v2.8) we introduced the Smart Refresh: you get instant updates with information modified by other members of your team; when something changes, it is replicated to all the other users on the same project. We want to keep improving that.

We also want to get a cleaner interface, easier to use, easier to take in at a glance. Take things like Ajax errors: we need to present them in a better way to prevent data loss. There is still some work to do on this. We need to be as reliable as Notepad: you don’t even notice that you’re using it, it just gets out of the way.

[PDC|28:57] As an open-source developer, this project looks very healthy. It seems that you guys are doing a great job. I know what it means to develop open-source: long nights, lots of time sacrificed to make it work.

[DM] I have to agree with you, there is a lot of work involved in trying to make an open-source project successful. I remember, back in the day, reading about open-source project management and how it was very likely that the project would fail and how difficult it was to make it take off… Truth is, there is a lot of work, and it is not always rewarding. I think I mentioned we had about 23,000 downloads; well, just a very small fraction of all those users that have given Dradis a shot at least once decide to get involved. Often they don’t even bother with giving feedback, making a feature request or filing a bug in the tracker. For the project contributors, energy comes from people that not only use the project but also go the extra mile and report bugs, or come up with new features or ideas that we should implement. This type of feedback is really important and pushes you to carry on working. Thankfully over the last few months we have also been improving in this respect. When we moved the repo to GitHub about a year ago, collaboration improved thanks to the features provided by their platform.

[PDC|30:57] I know what you’re talking about. Initially I wrote dnsrecon in Ruby, improving it day after day. Soon afterwards the guys at SecurityTube decided to feature dnsrecon in one of their reviews. I was very excited about it and was eager to find out whether they loved it or hated it… It turned out they hated it: “it fails”, “it doesn’t find this”, etc. I was able to reach out to the author to ask him why he didn’t report any of his findings and he said he didn’t think about it. ‘- But how long were you playing with the tool?’, ‘About two or three months’… I don’t know why he didn’t think about getting in touch, especially considering my email appears every time you run the tool!

I get a similar feeling when, going through old code, I find a bug that was introduced months ago but that I didn’t notice because in my dev environment it just works fine (because I have that special tweak that makes this work). Surely people using the tool must be getting errors because they don’t have the tweaks I have on my dev box! Why is nobody reporting these errors? Is anyone using the tool? I’ve spent so much time making this tool work and I didn’t receive any feedback…

Thankfully one of the guys that is providing a lot of quality feedback lately is Robin Wood (@digininja), also involved in Dradis development. His help was invaluable, especially for the version I ported to Python; apparently they use it a lot at his work [at RandomStorm]. He said, ‘I don’t use Fierce or DNSenum any more, I’m using DNSRecon exclusively’. At the very beginning he was submitting bug reports almost every day. For instance, when he mentioned that a certain UK-based host was giving him grief, it turned out the company had their DNS servers misconfigured (they had a SOA record but no NS or A records). This type of thing forced me to look more into DNS implementation in the wild as opposed to the DNS standard and theory, which is something very valuable.

Seeing that your tool is being used by others is what encourages open-source development. Even if this results in bug reports (oh no! more bugs), it means that you are helping someone. Even if the bug is filed at 1am you want to deal with it there and then. It kind of makes you proud of your work.

[DM] Definitely. However, if you go down that route you may spend an ever increasing amount of time fixing bugs that should have not made it to the production code to begin with instead of focusing on adding new features and implementing new functionality. We also have people eager to develop their own private Dradis plugins that join our IRC channel [#dradis at irc.freenode.org] and complain because there is not a lot of documentation available.

[PDC|35:00] And you feel like strangling someone…

[DM] Hehe, no, not at all. They are right! However, there is a tremendous amount of ground to cover, a lot of work. I tend to spend a few hours on it every day, the other contributors spend as much time as they can (they are all fairly busy) but there is a huge amount of work. It is a juggling act between bug-fixing, pushing new features and writing documentation. There is another side to this: now with Dradis Pro we have a group of users that are using Dradis for real, it is a key part of their business processes, a tool they rely upon. That’s also energizing, you know you have to keep improving, adding features and continue to make these people’s lives easier.

VulnDB HQ

[PDC|36:28] So apart from Dradis, there are a bunch of other things you are involved in at the moment…

[DM] That’s right. Most are closely related with this group of Dradis Pro users.

For organisations that understand the value of using Dradis to manage their projects, share information, etc., the next thing to streamline is reporting. In any given security review project you’re likely to find a bunch of bugs that you have discovered previously in other projects or for other clients.

For instance, SQL injection, server config issues or XSS. When the time comes to produce the final deliverable, a lot of what goes into the report could come from what you already wrote in a previous report (not the nitty gritty details, but say, the description of what SQLi is).

Back in the day, our VulnDB product was a platform to manage this type of information chunk. Organisations could have a repository of templates for common issues that they can reuse. If SQLi is found, then copy the standard template, add the instance-specific details of this particular case and be done with it. There are several key advantages to this type of approach: you have all your templates in a single repository that facilitates collaboration and improving upon the existing entries (for example, if a new CSRF paper is published, you can quickly edit your template and add it to the References section of your CSRF entry). This means that you have a single ‘master’ description for all the common issues, something that your team improves over time and gets reused every time you have to produce a report. That saves a substantial amount of time. The alternative is to rely on your memory to remember that months ago there was this project that had a related issue. If you are lucky and remember the project and can find the report, you may be able to reuse some of it. If you aren’t so lucky, you’ll end up writing it from scratch again, and maybe you’ll forget something important (not ideal for the consistency of the deliverables either).
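
The template workflow described above can be sketched in a few lines of Ruby. This is only an illustration of the idea: the data layout, the method names and the placeholder texts are all assumptions, and it says nothing about how VulnDB actually stores its entries.

```ruby
# Hypothetical in-memory repository of reusable issue write-ups,
# keyed by a short identifier. All texts are placeholders.
def default_templates
  {
    sqli: {
      title: 'SQL injection',
      description: 'Generic description of SQL injection (placeholder).',
      references: ['Reference A (placeholder)']
    },
    csrf: {
      title: 'Cross-site request forgery',
      description: 'Generic description of CSRF (placeholder).',
      references: ['Reference B (placeholder)']
    }
  }
end

# Copy the master template and attach the details of this one instance.
def instantiate(templates, key, details)
  template = templates.fetch(key)
  {
    title: template[:title],
    description: template[:description],
    references: template[:references].dup,
    details: details
  }
end

# Improving the master entry once benefits every later report.
def add_reference(templates, key, reference)
  templates.fetch(key)[:references] << reference
end

templates = default_templates
add_reference(templates, :csrf, 'Newly published CSRF paper (placeholder)')
finding = instantiate(templates, :sqli, 'The "id" parameter of /search is injectable.')

puts finding[:title]
puts finding[:details]
```

The references are duplicated on instantiation so that edits made to one report entry do not silently change the master template.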

We are now launching VulnDB HQ, a service that enables organisations to manage their repository of vulnerabilities and report entries. It connects with Dradis so you can easily import entries from your repo into Dradis and click ‘Export’ to get a high quality report. Instead of adding a short note like ‘HTTP TRACE is enabled’, you can import the full description, maybe paste in some details and mark the note as ready for the report. This takes about the same time a standard ‘note to self’ would have taken, but goes a long way towards cutting your reporting time.

In VulnDB HQ, in addition to your private repository, you also get access to the Public repository. This Public repo is maintained by us and already contains dozens of vuln descriptions that you can use (or use to inspire your own versions). This is very valuable because we take care to provide quality references for our issues that you can also check out to learn more about them.

[PDC|41:05] Are you also planning to add some delta-reporting type of feature, where users can compare their current results with the results of previous projects? That would be a valuable feature for a manager type if you can provide a dashboard of changes showing the evolution over time for a given set of assets.

[DM] Well, VulnDB HQ is just a platform to manage the entries for your reports. These features you are now describing for comparing results and evolution are more in line with what Dradis Pro is about. In Pro you have different projects with all the details in each case. It should be straightforward to match data from any two projects. I trust that these features will end up being implemented in Pro in the future.

[PDC|43:21] So, when is VulnDB HQ officially launching? That link you sent me said it wasn’t open yet…

[DM] Hopefully by the end of February. We are in private testing at the moment with our Security Roots customers and fine-tuning the last few bits and pieces. [We’re now open for business, head on to http://vulndbhq.com/why to find out more]

[PDC|44:03] That’s very interesting. It is amazing how people get passionate about what they love. In your case you have your day job and then Dradis is your second job.

[DM] Hehe, yeah, the job after my job.

[PDC|44:28] So, is there any other project that you are involved in that you want to talk about?

[DM] To be completely honest, my schedule is currently quite full as it is with all these things we’ve been talking about. Between shipping new releases of Dradis Community and Professional and launching VulnDB HQ I’m fairly busy. That of course doesn’t take into account the standard 8h of work of my day job.

[PDC|45:03] He he, definitely. I noticed it when we were arranging this interview, you telling us you hadn’t slept that night and such things. I was wondering whether you had a little baby to take care of or something else to keep you awake… But then I saw on Twitter a couple of days later that Dradis v2.9 was out and I understood what was going on…

[DM] Yeah, the problem has been that v2.9 had been ready for a while but there was this bug that we couldn’t hunt down. Unfortunately it was in a critical component, file uploads, so we had to make it work before releasing v2.9. We also released v1.4 of Dradis Pro, which had to wait for Dradis v2.9 to be released. A bit stressful.

Open source, Ruby and Rails

[PDC|46:22] So this was the bug introduced by the Ruby on Rails guys?

[DM] Not quite. It is a bit of a special case. We realised some time ago that parsing files containing tool output could be very time consuming, especially if it is a biggish file like a Nessus report. If you try to upload and parse inline while the user is waiting, the experience is not great, it takes too long. To work around this, we have a background process that deals with larger files (>1MB). The bug was in this worker process. We’re using a fairly old library to manage background tasks. It is fairly old, but there were not a lot of options because we needed our code to be cross-platform (we strive to make Dradis platform-independent). The Rails guys made some changes in v3.2 that broke this library. We had to patch it, but it was a bit of a nightmare.
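
The inline-versus-background decision can be sketched as follows. The interview does not name the background library Dradis uses, so this sketch swaps in a plain Ruby thread and queue as a stand-in for the separate worker process; the method names and file names are hypothetical, and only the roughly 1 MB threshold comes from the answer above.

```ruby
# Threshold from the interview: files above roughly 1 MB go to the background.
def background?(size_in_bytes, threshold = 1024 * 1024)
  size_in_bytes > threshold
end

# Stand-in for the real (slow) parsing work.
def parse_upload(name)
  "parsed #{name}"
end

# Parse small uploads inline; hand large ones to the worker via a queue.
def handle_upload(name, size_in_bytes, jobs, results)
  if background?(size_in_bytes)
    jobs << name
    :queued
  else
    results << parse_upload(name)
    :inline
  end
end

jobs = Queue.new
results = Queue.new

worker = Thread.new do
  # Queue#pop blocks until a job arrives; nil is used as the stop signal.
  while (name = jobs.pop)
    results << parse_upload(name)
  end
end

small = handle_upload('nmap.xml', 200 * 1024, jobs, results)
large = handle_upload('nessus.xml', 5 * 1024 * 1024, jobs, results)

jobs << nil # tell the worker to stop once the queue is drained
worker.join

parsed = []
parsed << results.pop until results.empty?
puts small, large, parsed.sort
```

The user-facing call returns immediately with `:queued` for the large file, which is the point of the design: the slow parse no longer blocks the upload request.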

[PDC|48:28] Yes, I can imagine you throwing “puts” all over the place to get some debug traces…

[DM] Well, that wasn’t even good enough. The exception was thrown within Rails code not our code. The library’s code caused Rails code to choke several layers down the rabbit hole. We had to use ruby-debug to go down the call stack to find out the culprit and figure out what was going on.

[PDC|49:15] Yup, sounds like a real nightmare. No wonder you didn’t get any sleep… You started me thinking, I’m using the Nokogiri library and I was wondering would it work in Windows?

[DM] Yes, thankfully it does.

[PDC|49:32] I’m asking because I remember when I created the DNSRecon importer that I used the REXML library shipped with Ruby. It was slooooooow. I tried a PTR scan of 300,000 and it took over one hour and 4 gigs of RAM. Then I learned about Nokogiri and rewrote my parsers and was able to cut it down to 3 or 4 megs of RAM and a fraction of the time. Trust me, I’ve suffered the pain of XML parsing!

Would it be an issue if I write my plugin using Nokogiri?

[DM] Not at all. Conveniently enough the step-by-step guide we have explains how to use Nokogiri efficiently in your plugins.

[PDC|51:09] Since I already wrote the plugin for Metasploit in Ruby, it should be easy.

[DM] Definitely. Actually in v2.9 we’ve revamped the Nessus, Nmap and Nikto plugins to use Nokogiri instead of REXML. It is a lot faster. We benchmarked the Nessus plugin and the new one is 60x faster than the old one.

[PDC|51:38] Wow… People, anyone using an older version of Dradis please update!

[DM] Yup

[PDC|51:44] I miss the 90s when everything they taught you at uni was how to use flat files, and not this XML nonsense…

[DM] It is a matter of finding the right tool. Parsing XML with Nokogiri is a different story. Very simple.

[PDC|52:22] But documentation isn’t great… you get a list of methods, but that’s about it. No return values, not much info…

[DM] Hopefully you’ll be able to use the code in some of our existing plugins to help you there.

[PDC|52:52] With that and Ruby’s IRB (Interactive Ruby shell) that should be easy. Going call-for-call with irb makes your life a lot easier.

[DM] Now that you bring it up, people are usually not aware of the powerful console that ships with Rails. It is an irb environment with all the application preloaded. It is an IRB session that lets you query your Dradis repository, you can check your Notes, Categories, Attachments, anything! It’s pretty powerful, I use it on a daily basis. Unfortunately it isn’t very well documented either [although we’re getting there: Dradis Development 101]. It’s a great debugging tool. That’s a protip right there.

[PDC|54:17] Since this is turning into a development episode, what’s your favourite editor: vim or emacs?

[DM] Vim

[PDC|54:32] For some reason, I got a flashback to the FOSS-weekly podcast where they always ask the favourite editor question 🙂 I’m looking forward to Matz’s next release of his Ruby interpreter (MRI). I saw in Rubycon he said he was jealous of Lua and running scripts in embedded devices like Android phones. He’s working on an ultra-fast Ruby implementation for those environments.

I know Ruby is a great community, I’ve never looked into Ruby on Rails, but after talking to you I think I’m going to give it a shot.

[DM] You are right, even considering all the issues we had, Rails makes your life a lot easier. You get a lot out of the box.

[PDC|56:10] Daniel, thanks again for joining us in this new edition of Pauldotcom en Espanol, it’s been a pleasure having you. Dradis is a tool I use quite a lot. I’m going to make sure that I include in the show notes all the links you provided. I’d like to invite everyone to give it a shot, but refrain from trying to run it in weird environments like HP-UX or AIX 😉

[DM] Well, if someone tries it on those platforms, please make sure to let us know how it goes. And remember that any feature requests are also more than welcome.

Thanks for having me on your show and for the opportunity to discuss our project and reach a broader audience. It’s been a pleasure.